Why DDoS Mitigation Fails: 5 Gaps That Testing Reveals

Companies invest heavily in DDoS mitigation, yet outages still happen, often at the worst possible moment. The problem is rarely the protection technology itself. It is the unseen gaps between deployment and a real attack, where misconfigurations, false assumptions, and untested scenarios quietly accumulate.

Red Button simulation data shows that 68% of identified faults are severe or critical, with an average first-test DDoS Resilience Score (DRS) of just 3.0—well below the recommended 4.5–5.0 baseline for most industries. This article breaks down the five most common gaps behind these failures, and how simulation testing exposes them before attackers do.

Key Takeaways

- DDoS mitigation doesn’t fail because of missing tools—it fails because those tools aren’t tested under real conditions.

- Most environments have blind spots (misconfigurations, untested vectors, and origin exposure) that only surface during an actual attack.

- Application-layer attacks are the biggest gap, often bypassing controls while degrading systems without clear signals.

- Teams play a critical role, and a lack of real-world experience can turn a manageable attack into a prolonged outage.

- Without testing, resilience is assumed rather than measured.

Gap 1: Misconfiguration: Protection Tools Set to Factory Defaults

Most DDoS solutions ship with generic settings, not settings tuned to your architecture

The majority of DDoS mitigation failures come from protection measures that were never properly tuned. Out-of-the-box configurations are designed for broad compatibility, not for the specifics of your traffic, your application logic, or your risk tolerance. Rate limits are often set high to avoid false positives, which also lets attack traffic through. Filtering rules are conservative by default. Thresholds assume “average” behavior that rarely reflects production reality.

Over time, these defaults become embedded into the environment. Teams assume protection is active because the system is running, but no one has validated whether it would actually trigger under attack conditions. In Red Button incident response engagements, it’s common to find mitigation layers still operating on initial deployment settings, even in mature environments with multiple vendors in place.

What testing reveals here

This is where DDoS simulation testing becomes critical. Instead of asking whether protection exists, it tests whether it works by simulating real attack patterns. Testing shows exactly which requests pass through undetected, which thresholds fail to trigger, and how mitigation logic behaves under load.

In one European Central Bank simulation, Cloudflare rate-limiting rules appeared correctly configured on paper. But in a real-life scenario, they were too permissive. An HTTPS POST flood exceeded expected traffic levels but still did not trigger mitigation, allowing the traffic to reach the application layer uninterrupted. There was no alert. No block. No signal to the team.

And that’s the core issue with misconfiguration: it doesn’t show up during normal operations. It only becomes visible when the system is stressed in ways it was never explicitly configured to handle—and by then, it’s too late.

Testing surfaces that gap early, under controlled conditions, and provides a clear path to recalibrating thresholds, tightening rules, and aligning protection with actual traffic behavior. This is where DDoS technology hardening becomes actionable, moving from generic defaults to configurations that reflect how the environment actually behaves under attack.
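As a rough illustration of what aligning a threshold with actual traffic can look like, the sketch below derives a per-client rate limit from observed baseline traffic rather than from a default. The sample data, the 99th-percentile choice, the 1.5x headroom factor, and the default limit of 1000 are all assumptions made for this example. They are not Red Button's method or any vendor's recommended values.

```python
import math

def suggest_rate_limit(per_client_rpm, percentile=99.0, headroom=1.5):
    """Suggest a per-client requests-per-minute limit from baseline samples.

    per_client_rpm: observed requests per minute, one sample per client-minute.
    percentile and headroom are illustrative assumptions, not vendor guidance.
    """
    if not per_client_rpm:
        raise ValueError("need at least one baseline sample")
    ordered = sorted(per_client_rpm)
    # Nearest-rank percentile: smallest sample that covers `percentile` % of the data.
    rank = max(1, math.ceil(percentile / 100.0 * len(ordered)))
    baseline = ordered[rank - 1]
    return math.ceil(baseline * headroom)

# Hypothetical baseline: most clients send a handful of requests per minute.
baseline_samples = [3, 5, 4, 6, 8, 5, 7, 4, 12, 6, 5, 9, 40, 6, 5, 4, 7, 8, 6, 5]
default_limit = 1000   # hypothetical out-of-the-box setting
tuned_limit = suggest_rate_limit(baseline_samples)

flood_rpm = 600        # hypothetical per-client POST flood rate
print("tuned limit:", tuned_limit)
print("default limit blocks flood:", flood_rpm > default_limit)
print("tuned limit blocks flood:", flood_rpm > tuned_limit)
```

With these made-up numbers, the flood stays under the default limit but well over the tuned one. A real recalibration would also need to account for seasonality, shared NAT addresses, and endpoint-specific behavior, which this sketch ignores.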

See also: European Central Bank case study

Gap 2: Shared Responsibility Blind Spots: What Your Provider Doesn’t Cover

Cloud and CDN providers do not deliver 100% protection

One of the most persistent misconceptions in DDoS mitigation is the assumption that cloud or CDN providers deliver complete protection by default. Services like AWS Shield, Azure DDoS Protection, or Cloudflare operate at the edge: they are designed to absorb volumetric traffic before it reaches you. But that coverage has clear boundaries.

Origin infrastructure, exposed endpoints, API gateways, and application-layer logic remain the customer’s responsibility. Even more critically, traffic originating within trusted networks (such as the CDN itself) can bypass certain protections entirely.

This is where the gap forms. Organizations see the provider’s protection as “on” and assume they are covered end-to-end. In reality, they are only partially covered—and often don’t know where that coverage stops.

Red Button simulation data clearly shows that 68% of companies believe they are more protected than they actually are, as vendor-level protection is often mistaken for full-stack resilience.

What testing reveals here

Simulation testing makes that boundary visible by running controlled attack scenarios across different entry points and showing how traffic can bypass CDN layers and reach the origin directly. It highlights exposed IPs and attack paths that sit outside the provider’s protection scope.

These are not edge cases—they are common in cloud environments, especially post-migration, where infrastructure evolves faster than security assumptions. Because Red Button operates as an authorized DDoS testing partner for both AWS and Azure, these simulations are executed safely within provider environments, without breaching terms of service or triggering unintended disruption.
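A simple inventory check can flag some direct-to-origin exposure before any simulation runs. The sketch below compares the addresses that public hostnames resolve to against a list of CDN ranges and reports anything that falls outside them. The hostnames, addresses, and CIDR ranges are hypothetical (they use reserved documentation prefixes, not real provider data), and a static check like this is only a first pass, not a substitute for testing the attack path itself.

```python
import ipaddress

# Hypothetical CDN ranges (RFC 5737 documentation prefixes, not real provider data).
CDN_RANGES = [
    ipaddress.ip_network("198.51.100.0/24"),
    ipaddress.ip_network("203.0.113.0/24"),
]

def is_behind_cdn(ip, cdn_ranges=CDN_RANGES):
    """True if the address falls inside one of the listed CDN ranges."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in cdn_ranges)

def find_exposed(dns_records, cdn_ranges=CDN_RANGES):
    """Return hostnames whose public address points outside the CDN ranges."""
    return sorted(
        host for host, ip in dns_records.items()
        if not is_behind_cdn(ip, cdn_ranges)
    )

# Hypothetical inventory of public hostnames and the addresses they resolve to.
records = {
    "www.example.com": "203.0.113.10",
    "api.example.com": "198.51.100.25",
    "legacy.example.com": "192.0.2.44",  # resolves straight to the origin
}

print(find_exposed(records))
```

In practice the range list would come from the provider's published address ranges, and the inventory from DNS and certificate data for the environment in question.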

Gap 3: Attack Vectors Your Stack Was Never Tested Against

Most stacks are validated against simple attack types, while modern attacks are far more sophisticated

Most organizations treat DDoS mitigation as “validated” once it passes a penetration test or a vendor check. The problem is scope. These exercises usually cover a narrow, generic set of scenarios.

But real attacks don’t follow that constraint. They combine techniques across layers: volumetric floods to create noise, protocol abuse to strain network components, and application-layer (L7) attacks to exhaust resources in ways that look legitimate. On top of that, low-and-slow variants are specifically designed to stay below detection thresholds.

When a broader simulation is applied, the gaps become visible. As seen in the earlier European Central Bank example, 2 out of 7 application-layer attack vectors against a critical service were not detected at all. In parallel, a network-layer attack was able to saturate the infrastructure despite correctly configured ACLs.

What testing reveals here

Simulation doesn’t just confirm whether protection exists; it shows how it behaves across different conditions. Some vectors are mitigated cleanly. Others only partially trigger controls, creating performance degradation without a clear signal. And some pass through entirely, because they were never part of what the system was designed or tuned to detect.

In the European Central Bank case, the issue wasn’t that controls were missing. It was that those scenarios had never been tested in combination.

This is where multi-vector attacks become effective. They are specifically designed to exploit the fact that most mitigation logic is validated in isolation. When multiple vectors hit simultaneously, gaps between layers start to appear—especially in areas that haven’t been exercised before.
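To make the difference between isolated and combined validation concrete, the sketch below enumerates which vectors a test plan would need to run together. The vector names and layer grouping are hypothetical placeholders, not Red Button's scenario catalog, and pairing is only the simplest form of combination.

```python
from itertools import combinations

# Hypothetical vector names grouped by layer, for illustration only.
VECTORS = {
    "network": ["udp_flood", "syn_flood"],
    "protocol": ["tcp_connection_exhaustion"],
    "application": ["https_post_flood", "slowloris"],
}

def cross_layer_pairs(vectors):
    """Pairs of vectors from different layers, intended to run simultaneously."""
    flat = [(layer, name) for layer, names in vectors.items() for name in names]
    return [(a[1], b[1]) for a, b in combinations(flat, 2) if a[0] != b[0]]

pairs = cross_layer_pairs(VECTORS)
isolated = sum(len(names) for names in VECTORS.values())

print(f"{isolated} isolated tests, {len(pairs)} cross-layer pairs")
for first, second in pairs:
    print(f"  {first} + {second}")
```

Even this small catalog produces more cross-layer pairs than isolated tests, which is one reason combined scenarios tend to go unexercised.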

Red Button simulation addresses this by expanding the test scope beyond a narrow set of scenarios. Instead of validating against a handful of known attack types, it runs controlled tests using over 100 attack scenarios spanning application, protocol, and network layers, using globally distributed infrastructure (across 30+ countries and up to 300 Gbps).

→ See how Red Button validates your protection with real-world attack simulation.

Gap 4: Layer 7 Underprotection: Application-Layer Attacks Are the Hardest to Catch

Volumetric protection does not stop application-layer exhaustion attacks

Most DDoS mitigation strategies are still heavily weighted toward network-layer protection. L3/L4 defenses are mature, widely deployed, and effective at handling high-volume floods. But that strength can create a false sense of coverage.

Application-layer (L7) attacks operate differently. Instead of overwhelming bandwidth, they target how the application behaves, sending requests (HTTP floods, Slowloris, large file downloads) in patterns that exhaust server resources over time. From the outside, the traffic often appears legitimate, making it far harder to distinguish from real users.

This is where traditional mitigation logic struggles. Thresholds are set to avoid blocking legitimate traffic, and volumetric scrubbing doesn’t apply when requests are low-volume but high-impact.

What testing reveals here

L7 testing doesn’t usually uncover a dramatic failure. It shows something more uncomfortable: the system is doing exactly what it was configured to do—and that’s the problem.

In simulation, the traffic often goes straight through. It looks legitimate, stays within expected patterns, and doesn’t trip volumetric defenses. But once it hits the application, you start to see the effect. Threads get tied up. Requests stack. Response times stretch. From the outside, nothing looks obviously wrong until performance starts to drop.

What typically comes out of these tests:

- traffic that passes every edge check but still puts real pressure on the application

- rate limits that were set to avoid blocking users, but end up allowing sustained L7 abuse

- a clear disconnect between what the WAF/CDN considers “normal” and what actually exhausts backend resources

- no clear trigger point—just gradual degradation that wouldn’t immediately be flagged as an attack

This is why L7 is consistently underprotected. It doesn’t behave like a classic DDoS event, and most environments aren’t tested under these conditions.
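The arithmetic behind this is worth spelling out. By Little's law, the average number of requests in flight equals the arrival rate multiplied by the time each request takes. The sketch below applies that to a slow endpoint. The per-client limit, worker pool size, client count, and service time are all hypothetical numbers chosen for illustration.

```python
def busy_workers(clients, req_per_sec_each, service_time_sec):
    """Little's law: average in-flight requests = arrival rate * time per request."""
    return clients * req_per_sec_each * service_time_sec

# All numbers below are hypothetical.
PER_CLIENT_LIMIT = 5   # requests/sec allowed per client at the edge
WORKER_POOL = 150      # backend worker threads available

# 50 clients, 2 requests/sec each, against an endpoint that takes 2 seconds.
clients, rate_each, service_time = 50, 2, 2.0
in_flight = busy_workers(clients, rate_each, service_time)

print("per-client limit tripped:", rate_each > PER_CLIENT_LIMIT)
print("workers needed:", in_flight, "of", WORKER_POOL)
print("pool exhausted:", in_flight >= WORKER_POOL)
```

Under these assumptions no single client comes close to the edge limit, yet the backend needs more workers than it has. Only 100 requests per second in total are involved, far below what volumetric defenses are built to notice.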

When Red Button runs these simulations, the gap becomes obvious. Not because something breaks instantly, but because you can see how far the current setup can be pushed before it stops protecting the application in any meaningful way.

Gap 5: Team Unpreparedness: How to React When an Attack Happens

DDoS skills gaps turn a manageable attack into an extended outage

Most SOC and NOC teams don’t get much hands-on exposure to DDoS events. So when one does happen, there’s often a pause while people try to understand what they’re looking at, whether it’s already being handled, or whether they should intervene at all. It’s common to assume the provider or the automation will handle it, especially in cloud-heavy environments.

The issue is that many attacks sit in that grey area where mitigation is only partial. Traffic is getting through, performance is starting to degrade, but nothing has clearly “failed.” Without experience, teams don’t always know when to step in or what to change. And that hesitation is usually what extends the impact.

Red Button simulation data shows a consistent pattern here. Teams that have already been through this kind of scenario tend to react earlier and more decisively. They adjust thresholds, escalate faster, and coordinate with providers more effectively. The difference is familiarity with how the system behaves under stress.

What testing reveals here

This is one of the few areas you can’t really assess on paper. Simulation effectively turns into a live exercise, and the gaps show up quickly:

- escalation paths that exist but aren’t followed cleanly under pressure

- uncertainty around when to rely on automated mitigation versus stepping in manually

- delays in adjusting rules or thresholds because teams aren’t confident in the impact

- communication breakdowns between internal teams and external providers

None of this is unusual—it’s just rarely tested. That’s why these simulations tend to be revealing. They show not just whether the system can handle the traffic, but whether the people operating it can respond in time and in the right way. In a different bank DDoS test case study, similar gaps were identified in controlled simulations, particularly regarding how teams responded when mitigation was only partially effective.
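One way to turn an exercise like this into something comparable between runs is to record a timeline and compute how long each stage took. The sketch below does that for a made-up timeline. The event names and timestamps are hypothetical, and which milestones matter will depend on the team's own runbook.

```python
from datetime import datetime

def minutes_between(start, end):
    """Elapsed minutes between two timestamps."""
    return (end - start).total_seconds() / 60

# Hypothetical timeline recorded during a simulation exercise.
timeline = {
    "attack_start": datetime(2024, 1, 15, 10, 0),
    "first_alert": datetime(2024, 1, 15, 10, 12),
    "escalated": datetime(2024, 1, 15, 10, 31),
    "rule_changed": datetime(2024, 1, 15, 10, 48),
}

time_to_detect = minutes_between(timeline["attack_start"], timeline["first_alert"])
time_to_escalate = minutes_between(timeline["first_alert"], timeline["escalated"])
time_to_mitigate = minutes_between(timeline["attack_start"], timeline["rule_changed"])

print(f"time to detect:   {time_to_detect:.0f} min")
print(f"time to escalate: {time_to_escalate:.0f} min")
print(f"time to mitigate: {time_to_mitigate:.0f} min")
```

Tracking the same three durations across repeated exercises gives a simple measure of whether hesitation and escalation delays are actually shrinking.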

How to Measure Your Actual Mitigation Posture

Most teams can describe their DDoS mitigation stack in detail. That’s not the same as knowing how it performs. The gap is that almost everything is assessed statically—configs, coverage, vendor capabilities. Very little of it is measured under the kind of conditions that actually matter.

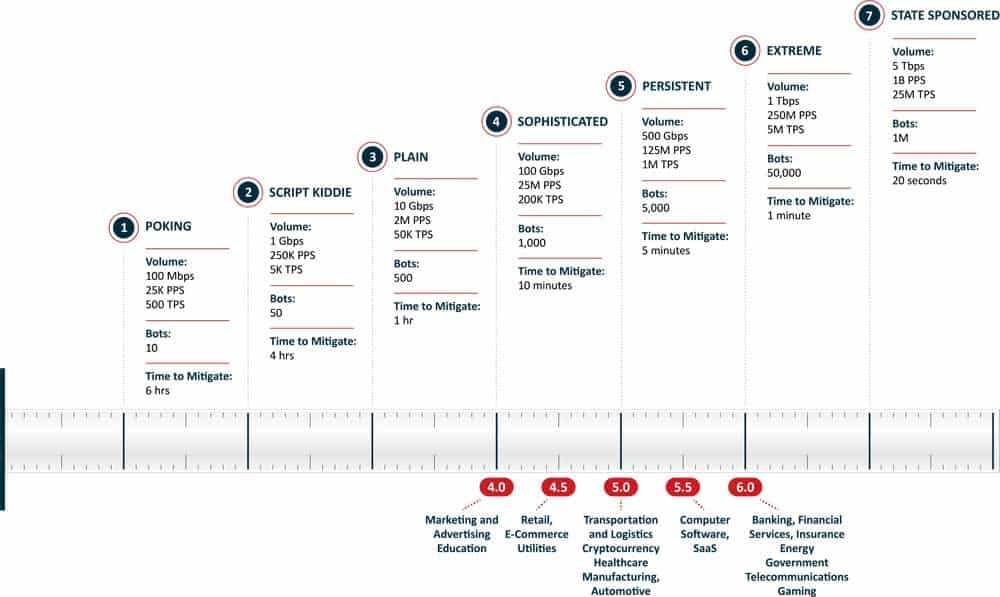

The DDoS Resilience Score is built around that idea. It’s a 1–7 score based on increasing attack sophistication and volume. Our testing produces an objective DRS, indicating your current protection level and comparing it to where you should be.

What tends to catch people off guard is the starting point. First tests average around 3.0. For most environments, you’d want to be somewhere closer to 4.5–5.0 to feel confident. The gap isn’t coming from anything dramatic—it’s usually a mix of small things: rules that don’t quite trigger, vectors that were never tested, responses that take longer than expected.

Why DDoS Mitigation Gaps Are Getting Harder to Ignore

The Digital Operational Resilience Act (DORA) is very explicit that resilience must be tested, not assumed. DDoS scenarios are part of that expectation. The NIS2 Directive pushes in the same direction: prove that systems can handle disruption, not just that controls exist.

At the same time, the environments themselves have become less predictable.

Cloud hasn’t reduced exposure—it’s changed where it sits. You’ve got origin paths that are easier to reach than people expect and APIs that weren’t part of the original threat model. Most mitigation setups weren’t designed for that level of sprawl. And if you look at recent attack data, today’s threat actors are deliberately combining multiple vectors, especially at the application layer, to create sustained pressure rather than immediate outages. That kind of attack doesn’t always take systems down outright—it slows them, disrupts transactions, and degrades user experience over time.

Summary: The 5 Gaps at a Glance

| Gap | Root Cause | What Testing Exposes |
| --- | --- | --- |
| Misconfiguration | Default or loosely tuned settings that don’t reflect real traffic patterns | Rate limits that don’t trigger, thresholds set too high, rules that allow attack traffic through unnoticed |
| Shared responsibility blind spots | Overreliance on cloud/CDN providers for end-to-end protection | Direct-to-origin exposure, internal traffic paths, and attack vectors outside provider coverage |
| Coverage gaps | Validation limited to a narrow set of attack types | Entire classes of attack vectors that pass through without detection or mitigation |
| L7 underprotection | Focus on volumetric (L3/L4) threats over application behavior | Legitimate-looking HTTP/HTTPS traffic that exhausts backend resources without triggering defenses |
| Team unpreparedness | Lack of hands-on experience responding to DDoS events | Slow or unclear escalation, hesitation to act, and missed opportunities to contain the attack early |

Most of the gaps in this article don’t come from missing tools. They come from things that were never exercised: configs that were never pushed, vectors that were never tested, teams that never had to react under pressure. The only way to close them is to run it properly: test the stack as it is today, see where it holds and where it doesn’t, and fix what shows up. Anything else is just assuming it will work when you need it most.

Find out which gaps exist in your mitigation stack. Request a DDoS simulation test →

FAQs

Why does DDoS mitigation fail even with the right tools in place?

Because tools are often misconfigured, partially deployed, or never tested under real attack conditions.

What are the most common gaps in DDoS mitigation?

Misconfiguration, shared responsibility blind spots, limited attack coverage, weak Layer 7 protection, and unprepared teams.

Why are application-layer (L7) attacks a major risk?

They mimic legitimate traffic, making them difficult to detect while gradually exhausting backend resources.

What is the shared responsibility gap in DDoS protection?

Cloud and CDN providers protect the edge, but origin infrastructure, APIs, and application logic remain your responsibility.

How can organizations identify weaknesses in their DDoS defenses?

By running controlled DDoS simulation tests that expose gaps across configurations, coverage, and team response.