DDoS Testing vs Protection: The Missing Layer in Your Defense

Key takeaways

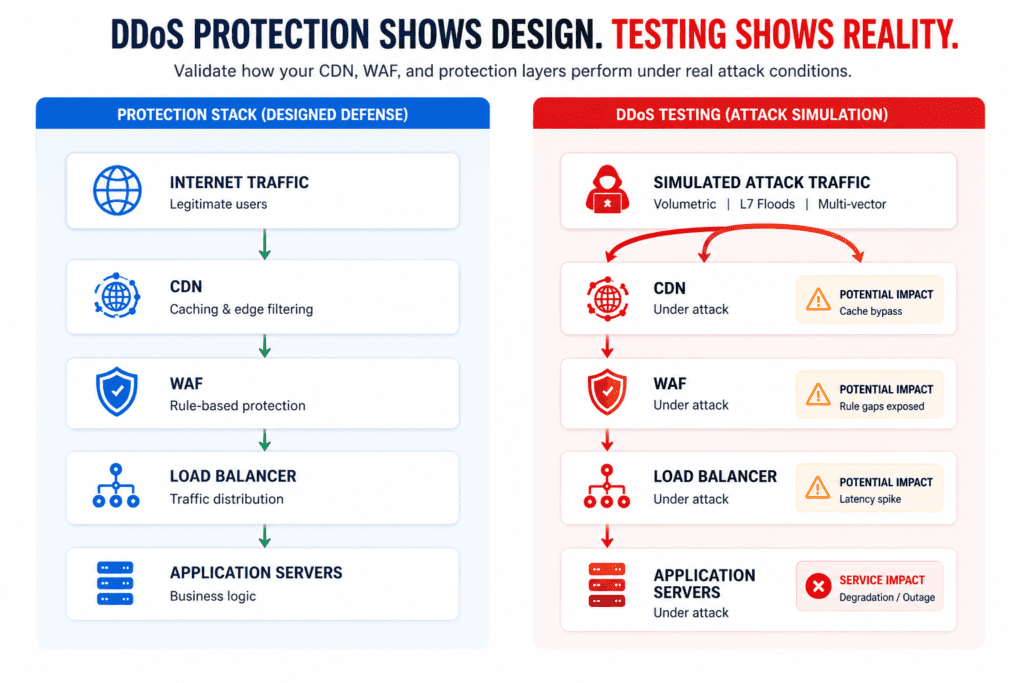

- DDoS protection refers to the tools and architecture deployed to stop attacks (CDNs, WAFs, scrubbing centers, firewall rules) operating continuously in the traffic path

- DDoS testing is a controlled simulation that validates whether those tools actually work under real-world attack conditions

- 68% of protection faults found in Red Button simulations were rated severe or critical, even though the organizations tested already had protection deployed

- Deployed protection that has never been tested under real attack conditions is a configuration, not security. Testing without protection in place is a simulation without purpose.

What DDoS Protection Actually Does

DDoS protection tools sit in the traffic path and apply rules, thresholds, and filters when an attack is detected, absorbing, redirecting, or dropping malicious traffic before it reaches the target infrastructure.

Protection stacks are typically built across multiple layers, such as:

- ISP CleanPipe absorbs high-volume floods at the network edge

- CDN and scrubbing centers filter L3/L4 attacks such as UDP floods and SYN floods

- Web Application Firewalls (WAF) operate at L7, inspecting HTTP/S traffic for application-layer abuse

- Rate-limiting rules cap request volumes from specific sources

- Bot management separates legitimate automated traffic from attack infrastructure

Deploying these layers is only part of the job; the bigger issue is configuration. Protection tools ship with generic defaults: thresholds, rule sets, and filtering logic designed for broad applicability rather than any specific environment. To be effective, those defaults need to be adjusted to reflect an organization’s actual traffic baseline, application behavior, and infrastructure topology.

For example, a rate-limit threshold that works for one environment may be too permissive for another with different traffic volumes or API usage patterns. Or a WAF rule set that was accurate at deployment may no longer reflect the attack surface after an architecture change.
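As a rough illustration of calibrating a threshold against a traffic baseline, the sketch below derives a per-source rate limit from observed request rates. The function name, the 99th-percentile choice, the 1.5x headroom factor, and the sample data are all assumptions made for this example; they are not vendor defaults or recommended values.

```python
import math
from statistics import quantiles


def suggest_rate_limit(baseline_rpm, percentile=99, headroom=1.5):
    """Suggest a per-source requests-per-minute cap from baseline samples.

    The percentile and headroom defaults are illustrative assumptions,
    not recommended values.
    """
    if len(baseline_rpm) < 2:
        raise ValueError("need at least two baseline samples")
    if not 1 <= percentile <= 99:
        raise ValueError("percentile must be between 1 and 99")
    # quantiles(..., n=100) returns the 99 cut points for percentiles 1..99.
    cut_points = quantiles(baseline_rpm, n=100, method="inclusive")
    return math.ceil(cut_points[percentile - 1] * headroom)


# Hypothetical baselines: per-source requests per minute in two environments.
storefront = [20, 25, 30, 28, 35, 40, 32, 27, 38, 45]
partner_api = [200, 260, 310, 280, 350, 400, 330, 270, 380, 450]

print(suggest_rate_limit(storefront))
print(suggest_rate_limit(partner_api))
```

The two environments end up with very different limits, which is the point: a single default threshold would be too loose for the first or too tight for the second.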

What DDoS Testing Actually Does

Where protection is passive, testing is deliberate. Instead of waiting for an attack to occur, a testing team generates real attack traffic against your live or pre-production environment to find out what your protection stack handles well and where it breaks down.

The output of a test isn’t a simple pass or fail. It’s a prioritized vulnerability report, including findings ranked by severity, each with specific remediation guidance.

However, the quality of that output depends heavily on methodology. Red Button typically uses a white-box approach, which means the testing team starts by learning the actual architecture: the specific tools deployed, how they’re configured, where traffic enters and exits, and what the normal baseline looks like. Attack vectors are then designed to stress the specific weak points of that environment, rather than running a generic battery of tests against an unknown target.

Since 2014, Red Button has run over 1,500 tests across a wide range of industries and infrastructure types. For the client, the process requires around five hours of involvement in total – enough to be thorough without disrupting normal operations.

Why Having Protection Is Not the Same as Being Protected

There’s an important distinction between having a DDoS protection tool deployed and actually being protected against DDoS attacks. The two aren’t the same thing, and the gap between them tends to show up in three specific areas.

The Configuration Gap

Protection tools don’t configure themselves. Rate-limit thresholds, WAF rules, and geo-blocking logic all need to be calibrated against an organization’s actual traffic baseline: what normal request volumes look like, where legitimate traffic originates, and how the application behaves under load.

When that calibration doesn’t happen, the tool operates on assumptions that may not hold. The European Central Bank experienced this directly: Cloudflare was deployed and running, but rate-limit thresholds had been configured too permissively. An HTTPS POST flood exceeded those thresholds without triggering any mitigation rules. The protection was in place; the configuration didn’t reflect the threat environment.

Addressing these kinds of configuration issues is part of what DDoS technology hardening covers – the process of systematically reviewing and tightening the settings across each layer of the protection stack.

The Coverage Gap

Most protection stacks are validated against a limited set of attack vectors at deployment, typically the most common volumetric and protocol-based attacks. DDoS attack types that fall outside that initial scope are often assumed to be covered, even though they are not tested.

Red Button simulates over 100 attack vectors per engagement. First-time tests regularly surface:

- Vectors the stack was never configured to handle

- Attack types the stack was designed for but misconfigured against

- Gaps introduced by architecture or infrastructure changes post-deployment

The case of a European government agency running an Azure DDoS Protection Plan illustrates the coverage problem clearly. The platform is designed for L3/L4 protection and handles volumetric attacks effectively within that scope. When tested against a TLS reconnection attack (which operates at a different layer), it produced no detection and no mitigation. The product was functioning correctly; it simply wasn’t designed to cover that attack category.
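One way to make a coverage gap like this visible before a test is to compare the scope each deployed control is designed for against the vectors in the test plan. The sketch below is a minimal version of that check. The control name, the layer labels, and the vector list are hypothetical placeholders, and the TLS vector is given its own label because the text only says it operates at a different layer from L3/L4.

```python
# Hypothetical inventory: the scope each deployed control is designed for.
STACK = {
    "cloud_l3_l4_protection": {"L3/L4"},
}

# Hypothetical test plan: attack vector -> where it operates (assumed labels).
VECTORS = {
    "udp_flood": "L3/L4",
    "syn_flood": "L3/L4",
    "tls_reconnection": "TLS",
    "https_post_flood": "L7",
}


def uncovered_vectors(stack, vectors):
    """Return the vectors that no deployed control is scoped to handle."""
    covered = set().union(*stack.values())
    return sorted(name for name, layer in vectors.items() if layer not in covered)


print(uncovered_vectors(STACK, VECTORS))
```

A check like this only reflects what the stack is designed to cover. Whether a covered vector is actually mitigated still has to be established by testing.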

The Shared Responsibility Gap

Cloud-native protection products operate within a defined scope that doesn’t always extend to the customer’s full environment. AWS Shield and Azure DDoS Protection, for example, protect the provider’s infrastructure. What sits outside that boundary, such as the customer’s origin server, application layer, or any infrastructure beyond the provider’s perimeter, requires separate consideration.

In an HR company case study, the company had deployed a host-based WAF on the same server as the application it was protecting. Under DDoS load, the WAF and the application drew from the same pool of CPU and memory resources. As attack traffic scaled up, both became unavailable simultaneously. The organization’s DRS score was 1.5 – significantly below the 4.5–5.0 baseline considered adequate for most industries.

What the Data Shows

Red Button has conducted over 1,500 DDoS simulations since 2014. The findings across that dataset point to a consistent and specific problem.

68% of protection faults identified in those simulations were rated severe or critical. In the context of DDoS mitigation testing, severe means no detection and no mitigation, while critical means partial mitigation only. These weren’t organizations without protection. They had invested in it, deployed it, and in most cases assumed it was working.
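To make the two ratings concrete, the sketch below applies the definitions above to a list of test findings and computes the share rated severe or critical. The `Finding` structure, the `"other"` bucket, and the sample findings are invented for illustration; this is not Red Button's scoring method or data.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    vector: str
    detected: bool
    mitigation: str  # "none", "partial", or "full"


def rate(finding):
    """Map a finding to the two ratings defined in the text."""
    if not finding.detected and finding.mitigation == "none":
        return "severe"
    if finding.mitigation == "partial":
        return "critical"
    return "other"  # lower ratings are not defined in the article


def severe_or_critical_share(findings):
    """Fraction of findings rated severe or critical (0.0 for an empty list)."""
    if not findings:
        return 0.0
    flagged = sum(rate(f) in ("severe", "critical") for f in findings)
    return flagged / len(findings)


# Hypothetical findings, invented for illustration only.
findings = [
    Finding("https_post_flood", detected=False, mitigation="none"),
    Finding("syn_flood", detected=True, mitigation="full"),
    Finding("tls_reconnection", detected=True, mitigation="partial"),
    Finding("udp_flood", detected=True, mitigation="full"),
]
print(f"{severe_or_critical_share(findings):.0%}")
```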

The DRS numbers reinforce this. The average resiliency score recorded at the first simulation is around 3.0. For most industries, the recommended baseline is 4.5–5.0. That’s not a marginal gap; it represents meaningful exposure across attack vectors that existing protection either doesn’t reach or hasn’t been configured to handle.

What’s notable about this data is what it doesn’t show. It doesn’t show a pattern of tools malfunctioning or vendors delivering products that don’t work. The protection products themselves are generally functioning as their vendors designed them to. The gap lies elsewhere: in the space between a tool being installed and a tool being properly calibrated, scoped, and validated for the environment it’s meant to protect.

How Testing and Protection Work Together

The two disciplines are not alternatives to each other; DDoS protection validation is what connects them. Protection stops attacks; testing confirms the protection works.

| | DDoS Protection | DDoS Testing |
| --- | --- | --- |
| Function | Stops attacks in real time | Validates that protection works |
| What it requires | Tools, configuration, architecture | Simulation, expertise, methodology |
| Output | Traffic filtering | Vulnerability report, recommendations |

When to Run a DDoS Test

There’s no single universal schedule for DDoS simulation testing, but there are clear triggers that should prompt a test. At a minimum, testing should be conducted annually: attack vectors evolve, and a simulation from 18 months ago reflects a threat landscape that no longer exists. Beyond that baseline, the following situations each warrant a test in their own right:

- After deploying a new protection tool or architecture. Initial deployment is when configuration gaps are most likely to exist and least likely to have been caught.

- After a cloud migration. Moving to AWS, Azure, a hybrid environment, or between providers changes the protection scope, the shared responsibility boundary, and the attack surface.

- After a significant architecture change. A new CDN, WAF, or API layer alters how traffic flows through the environment and how the protection stack responds to it.

- Before a high-risk period. Product launches, peak trading seasons, and regulatory audits all represent windows where availability is critical and the cost of a successful attack is highest.

- After a real DDoS incident. A post-incident test serves two purposes: understanding what failed and confirming that the remediation actually fixed it.
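The annual baseline and the triggers listed above can be expressed as a simple check, for instance as part of a change-management checklist. The sketch below is one possible encoding. The event names, the function name, and the 365-day cutoff are assumptions chosen for this example.

```python
from datetime import date

# Hypothetical event labels, one per trigger in the list above.
TRIGGER_EVENTS = {
    "new_protection_tool",
    "cloud_migration",
    "architecture_change",
    "high_risk_period_ahead",
    "ddos_incident",
}


def retest_reasons(last_test, today, events_since_last_test=(), max_age_days=365):
    """Return the reasons a new DDoS test is warranted (empty list if none)."""
    reasons = []
    # Annual baseline: never tested, or the last test is older than the cutoff.
    if last_test is None or (today - last_test).days > max_age_days:
        reasons.append("annual_baseline")
    # Any recognized trigger event since the last test warrants its own test.
    reasons.extend(sorted(set(events_since_last_test) & TRIGGER_EVENTS))
    return reasons


print(retest_reasons(date(2024, 1, 1), date(2025, 6, 1)))
print(retest_reasons(date(2025, 3, 1), date(2025, 6, 1), {"cloud_migration"}))
```

An empty result means none of the triggers apply yet; any non-empty result lists why a test should be scheduled.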

What DDoS Testing Is Not

DDoS protection testing is sometimes conflated with other security practices. The distinctions are worth being clear on.

It is not a penetration test. Unlike penetration testing, which covers a broad attack surface, DDoS defense testing focuses exclusively on availability and resilience under traffic-based attacks. Red Button simulates over 100 DDoS-specific vectors; a typical penetration test might cover five to ten. The two practices address different threat categories and neither substitutes for the other.

It is not a vendor self-assessment. CDN and cloud providers sometimes offer basic validation of their own layer as part of an onboarding or support process. That is not independent testing. It covers only the provider’s layer, under conditions the provider controls, and says nothing about how the full stack performs end-to-end.

It is not a one-time exercise. A single test produces an accurate picture of the environment at a specific point in time. Infrastructure changes, new attack vectors emerge, and configurations drift. A test from two years ago doesn’t reflect your environment today. For organizations that need continuous validation rather than periodic snapshots, DDoS 360 is designed for that purpose.

Find out what your protection stack actually stops. Request a DDoS simulation test →

FAQs

What’s the difference between DDoS testing and DDoS protection?

DDoS protection blocks attacks in real time using tools like CDNs and WAFs, while DDoS testing simulates attacks to verify whether that protection actually works.

Do I need DDoS testing if I already have protection in place?

Yes. Deployed protection without testing may be misconfigured or incomplete, leaving critical gaps that only real attack simulations can reveal.

How often should DDoS testing be performed?

At least annually, and after major changes such as cloud migrations, new security tools, architecture updates, or before high-risk business periods.

Can DDoS testing disrupt my live environment?

When done correctly (e.g., controlled, white-box simulations), testing is designed to minimize disruption while safely identifying weaknesses.

What does a DDoS test actually deliver?

A DDoS test provides a prioritized vulnerability report, remediation guidance, and a resiliency score that measures how well your protection performs under attack.